Traditional eye-typing interfaces can demand too much precision,

too much calibration, or hardware that is out of reach for many

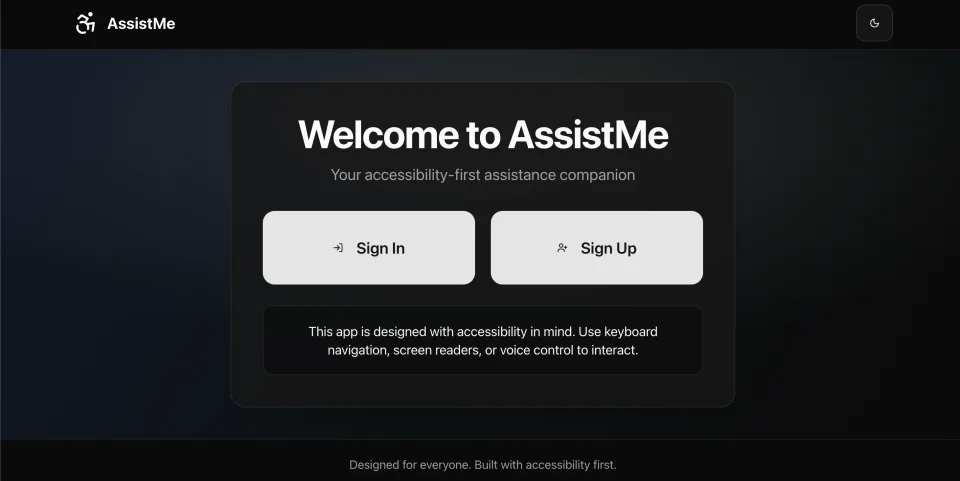

people. AssistMe explores a different path.

Instead of asking users to type precisely with their gaze, the interface breaks choices into large regions and confirms intent through sustained focus. That makes the interaction easier to understand and easier to use under time pressure.
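The region-plus-sustained-focus idea can be sketched as a dwell-to-confirm selector. This is a minimal Python sketch, not AssistMe's implementation. The `DwellSelector` class, the 3×2 grid, and the 800 ms dwell threshold are hypothetical. The sketch also assumes gaze samples arrive as screen coordinates with millisecond timestamps.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DwellSelector:
    """Hypothetical dwell-to-confirm selector over a grid of large screen regions."""
    screen_w: float
    screen_h: float
    cols: int = 3          # assumed grid layout, not taken from AssistMe
    rows: int = 2
    dwell_ms: float = 800.0  # assumed dwell threshold, not taken from AssistMe
    _current: Optional[int] = field(default=None, init=False, repr=False)
    _since_ms: float = field(default=0.0, init=False, repr=False)
    _fired: bool = field(default=False, init=False, repr=False)

    def region_at(self, x: float, y: float) -> Optional[int]:
        """Map a gaze point to a region index, or None if it falls off-screen."""
        if not (0 <= x < self.screen_w and 0 <= y < self.screen_h):
            return None
        # Clamp guards against float rounding right at the right/bottom edge.
        col = min(int(x * self.cols / self.screen_w), self.cols - 1)
        row = min(int(y * self.rows / self.screen_h), self.rows - 1)
        return row * self.cols + col

    def update(self, x: float, y: float, t_ms: float) -> Optional[int]:
        """Feed one gaze sample; return a region index once when the dwell completes."""
        region = self.region_at(x, y)
        if region != self._current:
            # Gaze moved to a different region (or off-screen): restart the timer.
            self._current = region
            self._since_ms = t_ms
            self._fired = False
            return None
        if region is None or self._fired:
            return None
        if t_ms - self._since_ms >= self.dwell_ms:
            self._fired = True  # confirm only once per continuous dwell
            return region
        return None
```

Because each region is large, small gaze errors rarely move the point into a neighbouring region. The timer resets whenever the region changes, so only deliberate, sustained focus triggers a selection.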

I also implemented stabilization heuristics to improve cursor reliability and reduce jitter, plus persistent calibration storage in PostgreSQL so returning users did not need to restart setup from scratch.
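The write-up does not say which stabilization heuristics AssistMe uses. As one common option, and purely as an illustration, the sketch below combines an exponential moving average with a dead zone. The `GazeStabilizer` class and its `alpha` and `deadzone_px` parameters are hypothetical, and their default values are assumptions rather than values from the project.

```python
import math
from typing import Optional, Tuple


class GazeStabilizer:
    """Hypothetical cursor stabilizer: exponential smoothing plus a dead zone.

    alpha and deadzone_px are illustrative assumptions, not AssistMe's values.
    """

    def __init__(self, alpha: float = 0.3, deadzone_px: float = 15.0) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.deadzone_px = deadzone_px
        self._smoothed: Optional[Tuple[float, float]] = None
        self._cursor: Tuple[float, float] = (0.0, 0.0)

    def update(self, x: float, y: float) -> Tuple[float, float]:
        """Feed one raw gaze sample and return the stabilized cursor position."""
        if self._smoothed is None:
            # First sample: start both the filter and the cursor at the raw point.
            self._smoothed = (x, y)
            self._cursor = (x, y)
            return self._cursor
        sx, sy = self._smoothed
        # Exponential moving average damps high-frequency jitter.
        sx += self.alpha * (x - sx)
        sy += self.alpha * (y - sy)
        self._smoothed = (sx, sy)
        cx, cy = self._cursor
        # Dead zone: move the visible cursor only once the smoothed gaze drifts far enough.
        if math.hypot(sx - cx, sy - cy) > self.deadzone_px:
            self._cursor = (sx, sy)
        return self._cursor
```

The moving average trades a little lag for smoothness. The dead zone keeps the cursor still during a fixation, which matters when selection depends on holding gaze steady on one region.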